The Ordeal of Standard English

On political correctness as a pseudomorphic attempt at new cultural diglossia

I.

Language serves a number of different functions, and one of them is to establish insiders and outsiders. It isn’t merely a tool for communication but also a tool to determine who isn’t able to communicate, and this distinction is rich with social and political implications. Language is bound up with identity. Recall the Bible story about the word “shibboleth” (Judges 12), in which the Gileadites, having defeated the Ephraimites in battle, held the fords of the Jordan and slaughtered every fleeing Ephraimite who couldn’t pronounce it right. This kind of linguistic exclusivism might not always be so extreme, but it has occurred throughout history and at all levels of social stratification.

The exclusionary function of language has always been particularly important for the effective transmission of privileged knowledge from generation to generation. Keeping a communication system confined to a small group of elite insiders has been essential for the memorization and, eventually, written canonization of hymns, laws, myths, rituals, and scientific information. The reasoning was that if commoners were able to control the transmission of these things, the information would become diluted and imprecise. When literacy was invented, writing was taught to a small number of people rather than everyone for this reason. And even when literacy grew more widespread in the ancient world, the use of different writing systems could demarcate one’s social position. For instance, the ancient Egyptians had three kinds of written script: hieroglyphics was the most difficult and exclusive, hieratic script was a bit easier, and they eventually created demotic script, which was the easiest to learn and thus more widely taught and used.

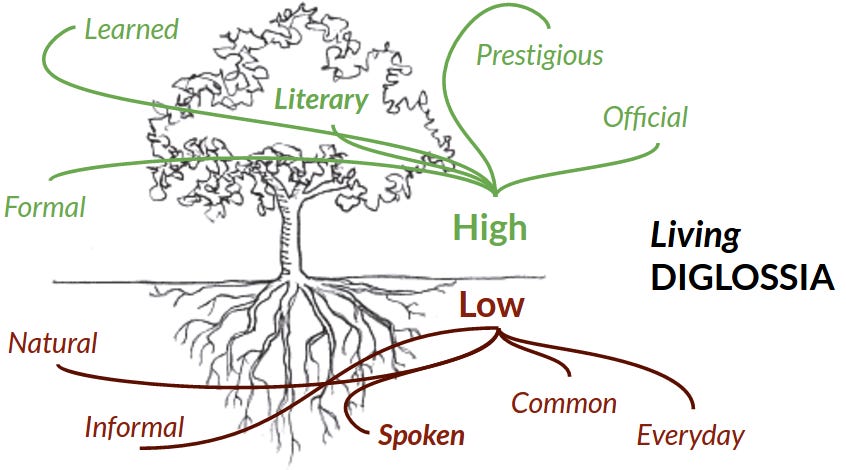

As some ancient civilizations grow more advanced, they enter into a state of cultural diglossia, or “two tongues,” a condition in which elite cultural knowledge is kept exclusive from common knowledge by being preserved in a separate language reserved for its educated class. Under such a condition, the inhabitants of the lower classes speak a vernacular language during their day-to-day lives under no assumption that the ideas they express will have a profound effect on society at large, while the output of the elite language forms the vessel through which long-lasting societal principles are established. These are the principles that subtend the values, myths, epistemology, metaphysics, religious beliefs, and codes of honor belonging to the entire civilization, including all the commoners who receive those ideas from above.

Cultural diglossia has often occurred as an innovation within ancient civilizations once a “classical” version of the language is established and grows increasingly distinct from the other regional vernacular languages it oversees. This was indeed what happened during the Kassite period in Mesopotamia (1550-1150 BC) and the Ramessid period in Egypt (1300-1100 BC). A weak diglossic condition still persists for Arabic, with Modern Standard Arabic (formerly “Classical Arabic”) as the culturally elite version of the language (it’s almost like the equivalent of Shakespearean English) overseeing a whole bunch of nonstandard Arabic dialects, some of which are not mutually intelligible. The European Middle Ages were also culturally diglossic, with the nobles and clergy being trained in Latin and overseeing a number of regional vernaculars (like English) that would only establish themselves as culturally legitimate later on.

II.

Now, with that preamble out of the way, let me get to the main point of this discussion.

For some time, a phenomenon has befuddled conservatives. It started in the 1970s with feminist attempts to reform the English language, it receded a bit, it came back and grew stronger in the 1990s during what people were calling “political correctness,” it receded a bit, and then it started up again and grew even stronger throughout the 2010s in what people are now calling “wokeness.” This phenomenon has amounted to a left-wing campaign to change standard English to reflect left-wing values. One reason we know that it has befuddled conservatives is that they insist on using different terms to describe what has essentially been the same process. And whenever conservative intellectuals attempt to identify the underlying principle beneath these surges in left-wing attempts to control language, they typically enter into a separate realm of abstract thinking and blame the situation on nebulous concepts, most of which they define vaguely and treat ahistorically, like “Gnosticism” or “nominalism.” As a result, many conservatives are not even aware that some of today’s most obnoxious language reforms and oft-used jargon terms were first proposed five decades ago.

I’d like to propose a simpler explanation by focusing exclusively on the linguistic aspect of these surges that have been occurring every other decade. I believe that the political left has been blindly groping its way towards the resurgence of strong cultural diglossia, the kind one encounters when studying the ancient world, with themselves at the helm, in control of newly-fashioned English standards that reflect their own prejudices and beliefs. Because their proposed standards are distinctly ideological in nature, achieving their goals would give them the built-in advantage of warding off intellectual adversaries who want to participate in the discourse of power but don’t want to contort their language in such a way as to pay fealty to those who rule over it.

In the discussion that follows, I’ll try to historically contextualize the situation these leftists have inherited and demonstrate why what they’re doing is difficult, slow-going, and often accompanied by self-deception.

III.

Cultural diglossia requires your elite language to be preserved through a highly prescriptive set of standards and practices, meaning that educating someone in that language compels the educator to make hard, precise distinctions between right and wrong usage. It couldn’t be otherwise. The elite language has to be well-trimmed and maintained, like a bonsai tree. It can’t be wild and unkempt, like a Chia pet.

One major historic example of a language reform campaign that illustrates this point is the Carolingian Renaissance, which began in the 8th century A.D. because the medieval clergy needed to get better at Latin. Latin documents were becoming unreadable to church authorities who lived in different areas from where the documents were produced. The Latin language was splintering off into different directions, taking on influences from the vernacular Romance tongues of Italy, France, and Spain, with these new dialects becoming mutually unintelligible.

Additionally, the clergy was poorly trained in it. There had always been (and always would be) parish friars who didn’t really know anything and just memorized certain formulae to get the job done, but there were also way too many priests who were regularly botching their Latin when they should’ve known better. One of the better-known examples of this situation causing problems is in the dispute between St. Boniface and Virgilius. Around 745 AD, Boniface learned of a priest who didn’t know Latin and baptized someone with the statement, “Baptizo te in nomine patriae, filia, et spiritus sancti,” which literally means, “I baptize you in the name of the country, in the daughter, and of the holy spirit.” Boniface, who had jurisdiction over the area, wanted everyone who was baptized by this priest to be re-baptized by a priest who could get it right, but the local church authorities felt this wasn’t necessary. Mistakes like that apparently happened all the time.

So the Carolingian Renaissance was a large-scale attempt to standardize the Latin language and make its spelling, syntax, and pronunciation all conform to an official set of rules. All of a sudden, priests were instructed to read all kinds of Latin books, including even secular works like Ovid and Virgil, just to get better at the language, and they were rigorously disciplined in their linguistic habits, with newly heightened expectations that they had to meet. And all in all, this massive linguistic overhaul worked pretty well. Of course, local documents from all over Europe (like title deeds, trade agreements, inventories, and so on) would still have tons of regional irregularities, and indeed, such irregularities were so common that they’re now taken as a good indication that a historical document from that time is not a forgery. But the church authorities surely recognized that even though a heavily-used language cannot be completely frozen in place, one can still keep it more or less under control.

Nowadays, however, Latin really is frozen in place, no innovations ever occur within it, and that’s because no one actually uses Latin to produce original texts — except perhaps the occasional dissertation at the pontifical universities. People make translations of the Harry Potter books and that sort of thing, and those who want to study Latin can read those as learning aids, but no one really uses Latin.

A paradox begins to emerge: a language can only reach a state of perfect, immaculate standardization when it is dead. And yet, for political organizations who need their subjects to communicate according to a common set of rules, this perfect standardization, corpse-like in its immobility, has nevertheless historically been the north star.

IV.

This is the central paradox that drives the debate concerning prescriptive grammar, or “prescriptivism.” On the one hand, linguists, who are instructed to analyze language as a subject of inquiry, do not want to make value judgments on what the proper language ought to look like. They feel strongly that the whole nature of language is to continually evolve, and therefore trying to freeze it with a set of fixed rules to determine its “properness” is counter-propositional to what language essentially is.

But the reason no one saw Latin as an “evolving language” during the Middle Ages is that it was meant to be a language for writing, and writing is “fixed” by its very nature, inscribed there on a physical object (for as long as that object remains) even as it pertains to matters that reside somewhere off in the ether. There was, in truth, a kind of “magic” to writing. In my short discussion on the French occultist Joséphin Péladan, I cite some scholarship that discusses Anglo-Saxon healing charms that use writing in magical ways:

In early medieval English healing texts (see Bald’s Leechbook), there are quite a few charms in which the infirm writes something down and either eats it (by writing it into food) or burns it. According to this way of thinking, the written text, with its abstract words, essentially carries the power to transform the physical object, either the food or the sick person’s body, and the moment of their destruction is when this somatic effect supposedly occurs.

A physical body is temporal and ever-changing, but a written concept remains forever, so even when one destroys the object that houses those words, their semantic power transfers onto something else. That is the kind of logic we’re dealing with here, and it’s the same logic that prevented people from seeing Latin as a language that needed to “evolve” in the same way a common vernacular might.

V.

That sensibility went away when the printing press was invented, which helped to demystify the written word. The ability to distribute books in large quantities meant that more texts could be translated into the vernacular, and sure enough, the vernacular languages of various European countries eventually displaced Latin. Essentially, the printing press meant that nation states could become secularized, and the growth of literacy combined with the widespread availability of vernacular books (like freshly translated Bibles) meant that people could be broadly unified on the basis of a shared language. Printing press entrepreneurs like Johannes Gutenberg and William Caxton paved the road for nationalism. And although the word “nationalism” today is associated with “archaic” or “superstitious” ideas like the throne and altar, or blood and soil, this development was instead both relatively secular and non-ethnocentric compared to what came before. Literacy and the printing press expanded the possibility of national cohesion from small tribes to vast, sprawling territories.

For that reason, vernacular languages started to become standardized, and the process began pretty early. It is also rather clear that when these vernacular languages were being standardized, the people in charge of that process understood that they were engaged in a weighty undertaking. Consider English. From the 15th to the 17th centuries, various English scholars started to reform its spelling so that it would start to resemble Latin a bit more. The words “doubt,” “debt,” and “receipt” did not always have the silent Bs and Ps in them, and “adventure” didn’t always have a D. In Middle English through the 14th century, they were spelled something like doutte, dette, recete, and aventure (of course the exact spellings would vary based on the local dialect and the individual author). These were words that came from Old French and had been part of the English vocabulary since the Norman Conquest of 1066. But then, spurred on by the new information available with the printing press, various members of the clerisy realized that the French themselves got those words from Latin, so they figured, “Why not make our English words look more like Latin and less like French?” and the changes began.

When linguistic historians explain these spelling changes, they usually do so with an air of contempt directed at the English clerisy for needlessly meddling with the language. This attitude is a hangover from Ferdinand de Saussure and his hostility towards people who try to change the language by altering its spelling, when languages supposedly ought to change only through oral developments (with the spelling merely being a reflection of the spoken word). For example, here’s an amusing video on YouTube in which a guy says that the intrusive Bs and Ps were placed in there by “irritating, interfering” Middle English and Early Modern scholars who inserted “completely unnecessary letters into perfectly well-spelt English words.” Of course, he doesn’t mention a tiny little thing called the Hundred Years’ War, which might have given these scholars an added political incentive to get the hell away from French spelling. But additionally, these spelling reformers understood on some level that the English tongue was being elevated as a cultural force to an unprecedented degree, and therefore they were carrying a great weight on their shoulders. English was to inherit all the prestige and dignity of both classical and medieval civilization, and so these intrusive letters carried with them some symbolic importance.

By the time the enlightenment came along and every aspect of the English language was now being standardized, right down to its syntax and punctuation, Latin was de-emphasized all across Europe. English, French, Italian, Spanish, German, and other languages limited their dialectal variation in order to be commonly spoken in newly unified nation-states, and therefore each language could now have its own body of dignified, serious literature and maybe even philosophy. The concept of “national character” became a possibility for the first time. But all of this came at the expense of a pan-European clerisy who all wrote in the same language. And most importantly of all, the “magic” of writing was completely gone, while the magic of language in general (e.g. the belief in taboo words, ritual incantations, etc.) was on the verge of being jeopardized.

VI.

What the time of the enlightenment meant was that something like a “reading public” was now fully possible, but this new situation was not necessarily as egalitarian as one might think. Remember what I said at the outset of this essay: language always carries an exclusionary function, because exclusion is necessary for the refinement and preservation of delicate thinking. So, while the printing press meant that more people could read books than ever before, and while it also meant that each country’s vernacular language could gain prestige, it’s important to remember that the prestige of each language was only gained within the long shadow that Latin had cast over Europe. This is why the various literary figures who became “the father of literature” for their respective countries all showed great familiarity not only with Latin literature but also classical rhetoric, another highly prescriptive art form. I am thinking of guys like Chaucer, Dante, Goethe, and François Villon. Amateur writers who paid printing companies to publish their works could prove their worth as serious intellectuals, but this happened only rarely, and when it did, it was because they showed that they had a great wealth of such education.

While the “strong” diglossia of Latin vs. vernacular had fallen away, each country’s national grammar had reached a state of “weak” diglossia (I’m borrowing the “strong vs. weak” distinction from this blog post). Strong diglossia means that there are two distinctly different, mutually unintelligible languages. Weak diglossia means that there’s one proper standard version for how the language ought to be spoken, while the other nonstandard dialects are perceived as improper or, at best, “colloquial” variations. So even if you wanted to enter into a discourse that could have an effect on the development of society, you would still need to adhere to a set of rules, ones that you might not necessarily have been reared with as a child.

For these reasons, the “reading public” during the enlightenment — which included not just people who could read but also those who wrote their own books — was still quite small. It mostly consisted of the elites and the rising, educated bourgeoisie. One also gets the sense that they themselves realized they had to remain limited, hence the creation of bourgeois secret societies like the Illuminati of Bavaria and the Freemasons. These organizations promoted the use of reason and logic to create a universal brotherhood of man, and yet they had to promote all of this while cordoning themselves off from the rest of the world in exclusive and rigidly hierarchical old boys’ clubs. Although Jürgen Habermas went to great lengths to deny their insidiousness in his Structural Transformation of the Public Sphere (because, after all, they’ve captured the imaginations of so many crazy-eyed conspiracy theorists), he nevertheless failed to address adequately the basic hypocrisy at play here. Same goes for Leo Strauss and his “esoteric” liberal reading of Lessing’s Ernst and Falk: Dialogues for Freemasons (1778-80). That whole topic is a bit too far afield of what I’m trying to discuss, though, so perhaps I’ll return to it in a later essay.

VII.

Let’s fast forward a bit through history. Since World War II, there has been one international language of commerce, and that is English. For obvious political reasons, English has taken over everything. In a sense, it has taken on the role that Latin once did in that it has become the (semi-)official language of all educated people in the West, and even much of the East. However, it is different in a key way: just about everybody learns it, and the numbers have only increased since the 1990s, when Communism ceased to be a factor in Europe and most of Latin America. Latin in the medieval period, by contrast, was only known by a small fraction of the total European population.

Additionally, a major development swept throughout the world, namely the revolution in electronic media, something extensively discussed by figures like Marshall McLuhan and other media ecologists. Whereas the age of print had the effect of unifying nations through the solitary, introspective practice of reading and writing done by a relatively small segment of each country’s population, the advent of electronic media meant that all information is now potentially for everybody, and it’s truly public (i.e. available to everyone) in a way that writing simply is not. Rather than unifying the world further, electronic media have instead re-tribalized the industrialized world and re-mystified language, fully restoring its exclusionary function in ways not seen since ancient times. Consequently, there have been two contrary schools of thought at play among intellectuals regarding the subject of language during the last several decades.

The first school of thought owes itself to the legacy of the enlightenment, and its foremost representation has been Chomskyan linguistics. It is the scientific view of language and has thus strived for its complete demystification. In this mode of thought, a message is to be decoupled from the vehicle through which it’s presented; in other words, grammar and semantics are entirely separate things. In order to justify this perspective, notions like “inner speech” or “mentalese” must be posited because otherwise thought would depend upon language, and that would mean that syntax to some extent determines the content of a message. The reason I view this school of thought as essentially enlightenment-based is that it has strong elements of liberal anti-authoritarianism embedded in the theory. In the same way that the enlighteners felt mistrust toward theocratic monarchy and everything that accompanied it, such as the regal ornamentation and pompous trappings of the aristocrats, the linguists feel similar contempt for the self-importance of the grammarian pedants, sticklers, and assorted language mavens, or, in a word, the prescriptivists. The collective mnemotechnic and sociopolitical value of cultural diglossia, whether strong or weak, utterly escapes them.

At their most charming, the linguists show a healthy skepticism towards globalization and the total dominance of English over every corner of the world, which manifests their concerns regarding dying languages and dialects in various primitive tribal communities, countryside villages, and urban enclaves. But similar contradictions characteristic of the enlightenment apply to these linguists all the same. Linguists, as stated above, dislike prescriptive grammar, and yet none of them seriously expect nonstandard dialects of English ever to enter into the discourse of power. They have generally promoted pedagogical methods that seek symbolically to heighten the value of regional dialects in both primary and secondary education, but they all do so while speaking perfectly Standard English.

A good example is found in their controversial endorsement in 1996 of the Oakland, California school board’s decision to let their teachers instruct their students in “Ebonics.” The linguists saw Ebonics as a second language worthy of equal treatment as English. Yet not a single one of them spoke Ebonics in their professional lives or believed that a Harvard doctoral dissertation could ever be written in it. And even in their own field, research had been indicating that the best way to ensure mastery over a language is through full immersion into it (see also the controversy surrounding Ron Unz and bilingual education in 1997). The contradiction, much like the central one of the enlightenment, is best explained by the dogged refusal to acknowledge that language carries an exclusionary function, and the goal of education is partly to teach people how to include themselves in privileged discourse. If they could only come to terms with that brute fact, maybe they would realize that deliberately teaching large groups of people in a deprivileged language is not the best way to empower them.

One last word on the linguists before we move on to the second school of thought concerning language. Although the linguists hold the pretense of scientific veracity and have done so for some time, their world has been epistemologically imploding over the last three decades, as we are growing more aware of the fact that we really have no clue as to how the hell language really works. Chomsky’s theory of universal grammar, the cornerstone that established linguistics as a legitimate field of social science, has taken a brutal beating from which it is likely never to recover. And although I personally think some theories on language’s inner workings are more accurate than others, there doesn’t seem to be a strong consensus among intellectuals and philosophers. Chomskyan linguistics is best understood as a late articulation of the enlightenment’s mythology, and it has been resting upon foundations that appear shakier and shakier after each passing decade. This is a problem that the following group of intellectuals has been able to take advantage of.

The second school of thought concerning language is an expression of its re-mystification that the electronic media revolution has engendered. It is anti-liberal, anti-enlightenment, left-wing, non-Marxist, and aggressively pro-identitarian for every group besides heterosexual white men. This school of thought sees language as possessing great symbolic importance, much as the medieval clerisy did, and it recognizes that whoever can set the parameters for what constitutes proper usage indeed possesses some serious power, while the parameters they set can in turn reinforce that power. That is why they aggressively have sought to reform what constitutes Standard English since the 1970s, first through feminism, and then on behalf of racial and sexual minority groups. This school of thought, though its adherents comprise a multiracial group of affluent strivers and status-seekers, nonetheless takes its ideas from the bottom rungs of the social ladder rather than the highfalutin prose stylings of the great western philosophers. They might see, for instance, that inner-city black youths say the word “nigga” on a regular basis and yet — as if claiming possession over it — they feel outraged when whites say the same word, even with benign intent. These leftists will then undertake the project of rationalizing this outraged behavior that they observe.

Such a bottom-up approach is why, at once, the adherents to this school of thought articulate their views on how language actually works as a scientific phenomenon rather poorly (that is, if they bother to articulate an underlying theory at all), while at the same time they also betray a more realistic/pragmatic assessment of how language actually functions as a tool of power. This school of thought has published work within the field of linguistics, and it has sometimes been an odd fit. For instance, I recently reviewed Deborah Cameron’s Verbal Hygiene (1995), which tries to attack the prescriptivism of British Thatcherites in the 80s while also endorsing the prescriptivism of political correctness and its advocates. The book attempts to show why holding both stances is not in fact hypocritical, but its argumentation is muddled and lazy. However, at the very least it implies an understanding of language at odds with both Saussurean structuralism and Chomskyan mentalism, one that is probably more realistic regarding political power despite having no theoretical foundation whatsoever. That it is considered an important and notable work within its field today says a lot about the strength of the ideology behind it. The book also stands as a good example of how self-deceptive the discourse surrounding identitarian left-wing prescriptivism tends to be. Cameron frequently brushes up against the admission that political correctness is ultimately, when stripped bare of its pretensions, a naked power grab, and yet she always somehow evades that conclusion at the last minute.

VIII.

Given the trajectory over the past five decades, it is hard to avoid the conclusion that the left is stumbling its way into the creation of a new diglossic arrangement in which they oversee the maintenance of a fully developed, ideologically loaded Standard English. This process has not been easy to identify, however, because they themselves do not seem to realize that this is what they’re doing. Political correctness and/or “woke” language (not to mention critical theory jargon) is becoming increasingly ingrained into elite discourse, but its progress has not been straightforward. We see constant attempts from the left to establish a new baseline of linguistic expectations, which might perhaps work as a scaffolding upon which to create further ones. But for the most part, the left has pushed towards its unseen destination in fits and starts, making progress in a two-steps-forward, one-step-back pattern.

Moreover, many of their proposed ideas have failed outright (e.g. changing “woman” to “womyn”), while some have been initially successful but then stopped being used so widely (e.g. the preference for “African-American” to describe American black people). Others are meant to empower a given minority group while being wildly unpopular among that same minority group, and still others failed at first but are now slowly becoming the universal standard. Concerning races, for example, I first encountered the practice of capitalizing “Black” while leaving “white” uncapitalized in a book from 1977; as of 2020, it is mandatory at the New York Times.

I believe that this state of confusion is because the identitarian left still currently expresses itself in a state of pseudomorphosis, a term I’m borrowing from Oswald Spengler’s Decline of the West. Pseudomorphosis is a condition in which a burgeoning new culture has to assume a warped shape because it is still stuck within the mores and expectations of the previous culture that it wants to supersede. Because of this older yet dominant culture whose ideas still loom heavily over the landscape, the new one cannot even adequately develop its own self-consciousness. And here, of course, the old culture is liberalism. Although wealthy milquetoast coastal liberals often willingly bend the knee to the identitarian left, it is liberalism that has nonetheless established the culture through which these leftists have no choice but to navigate.

It is also largely through the language and values of liberalism that the identitarian left still justifies itself, particularly in its pedagogy, but also in the stated reasoning behind its various language proposals. It is common to hear intellectuals promote identitarian leftist initiatives to the unwashed masses using appeals to fairness, civility, accuracy in speech, and various ideas found in cherished liberal documents like the Declaration of Independence. Many of these identitarian leftists even waver back and forth between these moral polarities within their own personal lives, just as many liberal elites are willing to give them the benefit of the doubt despite expressing misgivings when speaking privately among themselves. Because the representatives of these ideologies occupy the same offices and universities and live in the same neighborhoods, they must work and socialize with one another, so they form an uneasy but generally stable arrangement. Yet it seems increasingly clear that the identitarian left is ruthless in its thirst for power in ways that the liberals simply are not, and they are only now starting to imply a distinct cultural form that might perhaps develop in the future once the liberals have all died or been displaced.

One question that remains unanswered is whether or not the identitarian left will actually complete the task of constructing a stable, concrete, markedly different Standard English in which the rules are understood as permanent. It is not certain that this ever will happen, as intellectuals are all too aware of the paradox I discussed in Part III. This uncertainty is partly why, in a previous attempt to analyze the current situation, I’ve argued that critical theory jargon and political correctness amount to a meta-diglossic arrangement in which the difficulty of mastering the new Standard English is partly because it fluctuates so often, requiring constant attention to every new development in the language. In other words, the “bug” of constant change might instead be a “feature.” Identitarian leftists themselves will also sometimes justify themselves by arguing that there should be no standard mono-language (even as they’re knowingly proposing new standards to be enforced from above) and that all language is in a constant state of evolution, as if to ask, “So then, why not allow the language to evolve in the direction that we’re demanding?” But I’m inclined to see their own justifications as signs of self-deception as they appeal to the beliefs of liberal linguists rather than their own ideas. After all, many of the new language rules of today were proposed in the 1970s, which suggests that some of their ideas about proper linguistic articulation indeed have some shelf life.

I’ll end with this brief observation: whatever winds up happening in the future, it is rather clear that the beliefs of mainstream linguists have been powerless to stop the arrival of this newly developing diglossic arrangement. The belief that language is always evolving has enabled the identitarian left to make demands upon the direction into which it should evolve. And when linguists insist that the words themselves don’t really matter so much as the idea that informs them, the identitarians ask, “So, what’s the problem with us just changing the word if it doesn’t matter?” to which the linguists don’t have a good response. It would therefore seem that the entire field of linguistics has been rather easy to exploit. And more importantly, it lacks a valid foundational understanding of language and its relationship to political power. The magical view seems to be more realistic than the enlightened one.
